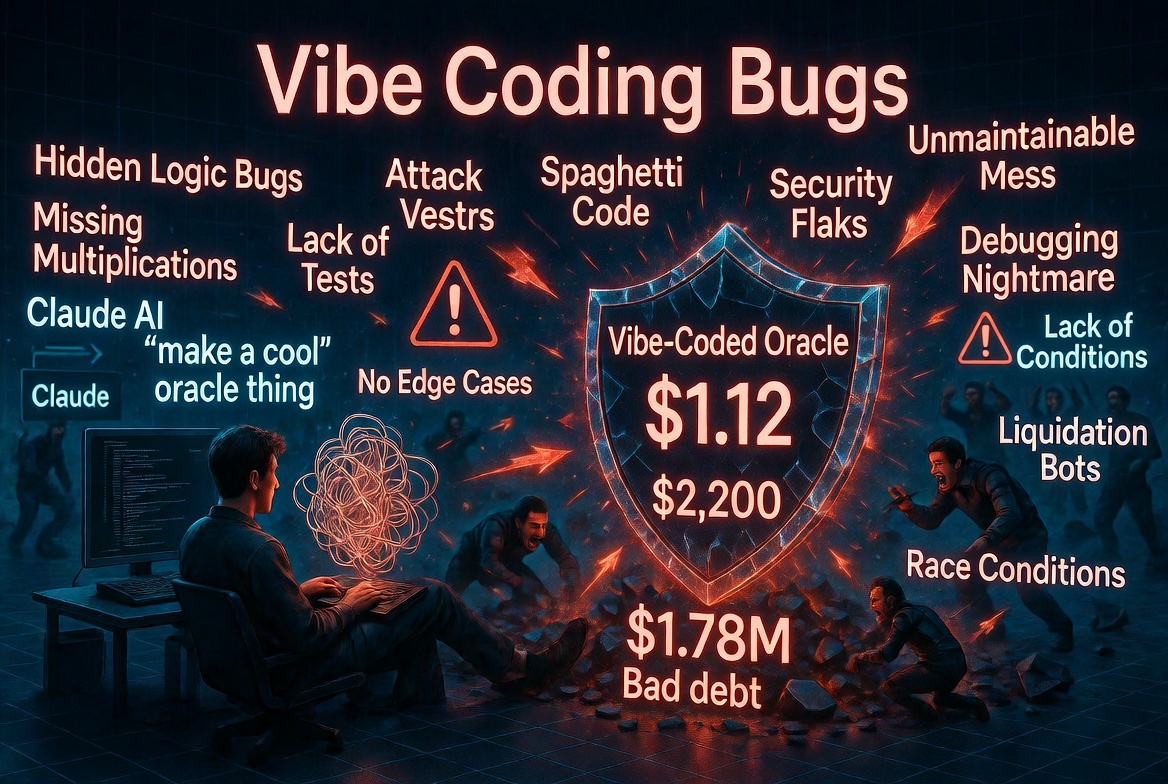

Vibe Coding Smart Contracts: What Could Go Wrong?

Vibe coding is everywhere. You describe what you want, the AI writes it, you ship it. For a landing page or a CRUD API, the downside of a bug is a broken form or a 500 error. Someone files an issue, you fix it, you redeploy.

Smart contracts do not work like that.

When a smart contract has a bug, there is no patch. There is no rollback. There is no customer support line. There is just the blockchain, immutable and indifferent, and whoever found the bug before you did.

We have now seen what happens when vibe coding meets DeFi, and the results are exactly what you would expect if you thought about it for thirty seconds.

The Evidence Is Piling Up

Moonwell Finance, February 2025: $1.78 million lost.

A governance proposal co-authored by Claude Opus 4.6 misconfigured a cbETH oracle. The cbETH/ETH exchange rate, roughly 1.12, was plugged directly into a slot expecting a cbETH/USD price, which should have been around $2,200. Liquidation bots noticed within minutes. Odin Scan flagged the exact vulnerability as Critical when we scanned the PR. The deployment did not.
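The arithmetic behind that mistake is easy to sketch. The snippet below is a minimal Python model, not the Moonwell proposal code. It uses the two approximate figures quoted above and backs an ETH/USD price out of them purely for illustration; the function name `compose_lst_usd_price` is hypothetical.

```python
from decimal import Decimal

# Approximate figures quoted in the text above.
CBETH_ETH_RATE = Decimal("1.12")      # cbETH/ETH exchange rate (a ratio, not a dollar price)
EXPECTED_CBETH_USD = Decimal("2200")  # roughly what the USD slot should have held

# Assumption for illustration: the ETH/USD price implied by those two figures.
eth_usd = EXPECTED_CBETH_USD / CBETH_ETH_RATE


def compose_lst_usd_price(exchange_rate: Decimal, underlying_usd: Decimal) -> Decimal:
    """cbETH/USD = (cbETH/ETH) * (ETH/USD)."""
    return exchange_rate * underlying_usd


correct = compose_lst_usd_price(CBETH_ETH_RATE, eth_usd)
misconfigured = CBETH_ETH_RATE  # the raw ratio, read as if it were dollars

print(f"composed cbETH/USD:   {correct:.2f}")
print(f"misconfigured value:  {misconfigured:.2f}")
print(f"undervalued by about: {correct / misconfigured:.0f}x")
```

Under these assumptions the collateral is priced roughly three orders of magnitude too low, which is the kind of gap a liquidation bot will find quickly.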

A growing pattern, unnamed but real.

Post-mortems increasingly show commits with AI co-authorship messages. "Co-authored-by: GitHub Copilot." "Generated with Claude." The AI did not introduce the vulnerability in all cases. But it did write code that a developer accepted without fully understanding, and that gap is where bugs live.

Why AI Models Make This Kind of Mistake

AI coding assistants are next-token predictors. They produce code that looks correct based on patterns they have seen. They do not reason about invariants. They do not understand that the variable you named price needs to represent a specific unit, denominated in a specific currency, derived from a specific oracle composition.

They will write what you ask for. If you ask for "configure the cbETH oracle," they will write a configuration. If the oracle address you hand them returns ETH-denominated values and you expect USD-denominated values, the AI will not notice. It cannot notice. That semantic gap is invisible to it.

There are a few specific failure patterns that show up repeatedly:

1. Unit confusion. Prices in ETH vs USD. Amounts in wei vs ether. Timestamps in seconds vs milliseconds. AI models produce code that compiles cleanly and handles the type correctly while getting the semantics completely wrong.

2. Copy-paste oracle configuration. AI models will copy patterns from similar contracts they have seen in training. If those patterns use a simple price feed for ETH and you are configuring an LST like cbETH or wstETH, the AI might omit the composition step because it has seen simpler configurations more often.

3. Missing validation on user-controlled inputs. AI-generated code tends to skip edge case validation. It writes the happy path extremely well. Zero amounts, empty addresses, re-initialization attacks on upgradeable contracts: these are the cases AI models most commonly miss.

4. Overcomplicated access control. Ask an AI to implement access control and it will often produce something that looks thorough but has a gap. A function marked as internal that can be reached through a proxy. A modifier that can be bypassed during initialization. A role assignment in the constructor that an owner can later reassign arbitrarily.

5. Outdated patterns. AI models train on historical code. Solidity 0.8 arithmetic overflow protections, newer OpenZeppelin patterns, recent Anchor constraint systems: if the model's training data skews toward older examples, it will generate older patterns that may have known issues.
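Unit confusion, the first pattern above, survives because a bare number carries no denomination. One possible guard, sketched here in Python rather than contract code, is to tag every price with the pair it is quoted in and refuse to mix pairs. The `Price` class and `set_collateral_price` function are hypothetical names, and the ETH/USD figure is illustrative.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Price:
    """A value tagged with the pair it is quoted in, e.g. cbETH/USD."""
    value: Decimal
    base: str   # asset being priced
    quote: str  # currency it is priced in

    def times(self, other: "Price") -> "Price":
        # A/B * B/C = A/C; any other combination is a unit error.
        if self.quote != other.base:
            raise ValueError(
                f"cannot compose {self.base}/{self.quote} with {other.base}/{other.quote}"
            )
        return Price(self.value * other.value, self.base, other.quote)


def set_collateral_price(price: Price, expected_quote: str = "USD") -> Decimal:
    """Hypothetical config slot that only accepts prices quoted in expected_quote."""
    if price.quote != expected_quote:
        raise ValueError(
            f"expected a {expected_quote} price, got {price.base}/{price.quote}"
        )
    return price.value


cbeth_eth = Price(Decimal("1.12"), "cbETH", "ETH")
eth_usd = Price(Decimal("2000"), "ETH", "USD")  # illustrative figure

# Composing the two feeds yields a cbETH/USD price the slot accepts.
cbeth_usd = cbeth_eth.times(eth_usd)
print(set_collateral_price(cbeth_usd))

# The raw exchange rate is rejected instead of silently accepted.
try:
    set_collateral_price(cbeth_eth)
except ValueError as err:
    print("rejected:", err)
```

The same idea applies to wei versus ether and seconds versus milliseconds: if the unit lives in the type or the tag, the mismatch fails loudly instead of compiling cleanly.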

The Specific Risks by Chain

Solidity / EVM

The EVM ecosystem has the most AI-generated code in production because it has the most tooling. Copilot, Cursor, Claude, and GPT-4 all have solid Solidity completions. The risks are:

- Reentrancy via AI-generated withdrawal patterns that forget checks-effects-interactions

- Oracle configurations that miss LST/LRT price composition

- Upgradeable contract storage collisions when AI adds new state variables without understanding proxy storage layout

- Missing slippage checks on DEX integrations
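The reentrancy item is the easiest of these to demonstrate. The following is a toy Python model, not Solidity: the `Vault` class, the `on_receive` callback standing in for an external call, and the `attack` helper are all hypothetical. It shows only why the ordering in checks-effects-interactions matters.

```python
class Vault:
    """Toy in-memory vault; on_receive models an external call to the recipient."""

    def __init__(self, safe: bool):
        self.safe = safe
        self.balances = {}
        self.total = 0

    def deposit(self, user, amount):
        self.balances[user] = self.balances.get(user, 0) + amount
        self.total += amount

    def withdraw(self, user, on_receive):
        amount = self.balances.get(user, 0)
        # Checks
        if amount == 0 or amount > self.total:
            return
        if self.safe:
            # Effects first, then the interaction.
            self.balances[user] = 0
            self.total -= amount
            on_receive(amount)
        else:
            # Interaction before the balance is cleared: the recipient can re-enter.
            self.total -= amount
            on_receive(amount)
            self.balances[user] = 0


def attack(vault):
    """Withdraw once, re-entering withdraw from inside the callback."""
    received = []

    def on_receive(amount):
        received.append(amount)
        vault.withdraw("attacker", on_receive)

    vault.withdraw("attacker", on_receive)
    return sum(received)


for safe in (False, True):
    vault = Vault(safe=safe)
    vault.deposit("victim", 90)
    vault.deposit("attacker", 10)
    print(f"safe={safe}: attacker received {attack(vault)}")
```

With the unsafe ordering the attacker, who deposited 10, walks away with all 100; with the effects applied first, the re-entrant call sees a zero balance and the attacker receives only their own 10.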

Rust / Solana (Anchor)

Anchor's macro system gives a false sense of security. The macros handle a lot, but not everything. AI-generated Anchor code commonly:

- Omits `has_one` constraints on accounts that should be validated

- Skips signer checks on admin instructions

- Generates reinitialization vulnerabilities when the `init` constraint is missing on accounts that could be passed twice

CosmWasm

CosmWasm has the smallest AI training corpus of the three, which means AI completions are least reliable here. Common AI mistakes:

- Using `unchecked_add` where checked arithmetic is required

- Missing `addr_validate` on user-supplied addresses

- Unsaved storage: computing a value, forgetting to write it back to state
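Two of these mistakes, unchecked arithmetic and unsaved storage, can be modeled in a few lines. This is a Python sketch of the behavior, not CosmWasm code: the helper names and the `Store` class are hypothetical, and Python integers are clamped to 128 bits by hand to imitate a fixed-width type.

```python
U128_MAX = 2**128 - 1


def checked_add_u128(a: int, b: int) -> int:
    """Checked addition on a modeled 128-bit unsigned integer: error, never wrap."""
    if not (0 <= a <= U128_MAX and 0 <= b <= U128_MAX):
        raise ValueError("operands must fit in u128")
    result = a + b
    if result > U128_MAX:
        raise OverflowError("u128 addition overflowed")
    return result


def wrapping_add_u128(a: int, b: int) -> int:
    """What unchecked arithmetic can silently do instead."""
    return (a + b) & U128_MAX


class Store:
    """Toy contract state; load returns a copy, like deserializing from storage."""

    def __init__(self):
        self._data = {}

    def load(self, key):
        return dict(self._data.get(key, {"balance": 0}))

    def save(self, key, value):
        self._data[key] = dict(value)


def credit(store: Store, key: str, amount: int) -> None:
    account = store.load(key)
    account["balance"] = checked_add_u128(account["balance"], amount)
    store.save(key, account)  # omit this line and the update never reaches state


store = Store()
credit(store, "alice", 5)
print(store.load("alice")["balance"])
print(wrapping_add_u128(U128_MAX, 1))
```

The wrapping version turns the maximum balance plus one into zero without complaint, and a `credit` that skips the final `save` passes a casual read while leaving state unchanged.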

The Audit Does Not Fix This

Here is the part that is easy to miss.

Traditional audits happen once, before launch, on a snapshot of the code. After the audit, the code keeps changing. Governance proposals add new parameters. New collateral types get listed. Integrations with new protocols get added.

Each of those changes is a new attack surface. None of them go through the audit.

The Moonwell misconfiguration was introduced in a governance proposal, not in the core contracts that auditors reviewed. The audit would not have caught it even if it ran the day before.

What catches it is continuous scanning. Every PR, every proposal script, every deployment config, scanned automatically as part of the CI/CD pipeline.

What To Do If You Are Using AI to Write Smart Contracts

These are not theoretical suggestions. They are the specific gaps that cause real losses.

Validate oracle units explicitly. For every price feed, write a comment stating what unit it returns and what unit you expect. Write a test that asserts the value falls within a reasonable range. For LSTs, derive that range from the underlying asset's USD price scaled by the exchange rate, never from the exchange rate alone.
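A range test of this kind can be very small. Here is one possible shape in Python, assuming the expected LST price is the underlying USD price scaled by the exchange rate; the function name `lst_price_in_range`, the 5% tolerance, and the ETH/USD figure are illustrative choices, not recommendations.

```python
from decimal import Decimal


def lst_price_in_range(
    reported_usd: Decimal,
    underlying_usd: Decimal,
    exchange_rate: Decimal,
    tolerance: Decimal = Decimal("0.05"),
) -> bool:
    """True if reported_usd is within tolerance of underlying_usd * exchange_rate."""
    expected = underlying_usd * exchange_rate
    low = expected * (1 - tolerance)
    high = expected * (1 + tolerance)
    return low <= reported_usd <= high


ETH_USD = Decimal("2000")         # illustrative figure, not live data
CBETH_ETH_RATE = Decimal("1.12")  # approximate rate quoted earlier in the article

# Feed returns: cbETH/USD. Expected: cbETH/USD.
assert lst_price_in_range(Decimal("2240"), ETH_USD, CBETH_ETH_RATE)

# A raw exchange rate in the USD slot fails the same check.
assert not lst_price_in_range(Decimal("1.12"), ETH_USD, CBETH_ETH_RATE)
```

A check like this does not prove the feed is right, but it does turn a wrong-unit value into a failing test rather than a live parameter.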

Test the unhappy paths. AI writes happy paths well. Write your own tests for zero amounts, empty addresses, maximum values, and re-initialization. If the AI does not generate these cases, add them manually.
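As a rough picture of what those manual tests cover, here is a toy Python stand-in for a contract with an initializer and a deposit function, followed by the unhappy-path assertions. The `Pool` class and `expect_error` helper are hypothetical; in a real project the same cases would be written in the contract's own test framework.

```python
class Pool:
    """Toy stand-in for a contract with an initializer and a deposit function."""

    ZERO_ADDRESS = "0x" + "00" * 20
    MAX_UINT256 = 2**256 - 1

    def __init__(self):
        self.owner = None
        self.total = 0

    def initialize(self, owner: str) -> None:
        if self.owner is not None:
            raise RuntimeError("already initialized")
        if not owner or owner == self.ZERO_ADDRESS:
            raise ValueError("owner must be a non-zero address")
        self.owner = owner

    def deposit(self, amount: int) -> None:
        if amount <= 0:
            raise ValueError("amount must be positive")
        if self.total + amount > self.MAX_UINT256:
            raise OverflowError("total would exceed uint256")
        self.total += amount


def expect_error(exc_type, fn, *args) -> bool:
    """Return True if calling fn(*args) raises exc_type."""
    try:
        fn(*args)
    except exc_type:
        return True
    return False


pool = Pool()
# Empty and zero addresses
assert expect_error(ValueError, pool.initialize, "")
assert expect_error(ValueError, pool.initialize, Pool.ZERO_ADDRESS)
# Re-initialization
pool.initialize("0x" + "ab" * 20)
assert expect_error(RuntimeError, pool.initialize, "0x" + "cd" * 20)
# Zero amounts and maximum values
assert expect_error(ValueError, pool.deposit, 0)
pool.deposit(Pool.MAX_UINT256)
assert expect_error(OverflowError, pool.deposit, 1)
```

None of these cases is exotic, which is the point: they are cheap to write and they are the ones generated code tends to leave out.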

Never deploy AI-generated upgrade scripts without a human reading every line. Upgrades, migrations, and governance proposals are where the most dangerous AI-generated bugs appear. They are also the least reviewed, because they look like "config" rather than "code."

Run a scanner on every PR. Not just the audit before launch. Every PR. Every commit. Every proposal.

The AI is not going to stop writing code. The code is not going to stop going on-chain. The only variable you control is whether you put a verification layer between the two.

Odin Scan integrates directly into your GitHub Actions pipeline. It scans every PR automatically and flags oracle misconfigurations, access control gaps, and unsafe arithmetic before they reach mainnet. Start your free trial and have it running in under five minutes.